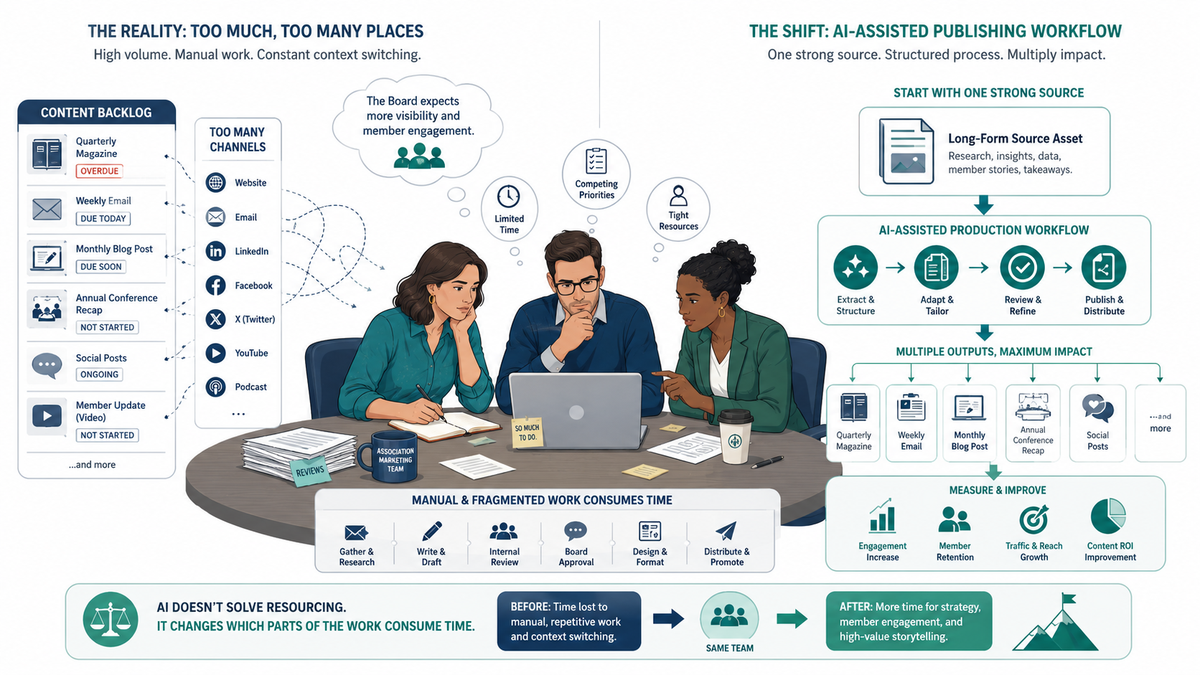

Your association has a marketing team of two to four people, a publication schedule built for a staff twice that size, and a board that still expects the quarterly magazine, the weekly email, the monthly blog post, and the annual conference recap. AI does not solve the resourcing problem, but it does change which parts of the work eat your time. Here is what AI publishing for associations looks like in practice.

The content backlog is not a discipline problem

The Nonprofit AI Adoption Report 2026 (346 nonprofits surveyed in late 2025) found that 92% of organizations now use AI in some capacity. Yet only 7% report major strategic impact. The gap between those two numbers is where your backlog lives.

The reason is structural. Eighty-one percent of organizations use AI individually and on an ad hoc basis. Only 4% have documented, repeatable workflows. In practice, AI becomes another individual habit layered onto a team that already has too many individual habits propping up a broken process. The backlog does not clear. It just gets maintained by different tools.

The problem your team faces is not a creativity problem. One person writing across four channels — email, blog, LinkedIn, member magazine — is a production problem. Production problems do not get solved by giving people better writing tools. They get solved by redesigning the workflow.

AI is not a writing tool. It is a production tool. Treat it like one.

When associations skip workflow design and go straight to the tool, content quality becomes dependent on whoever is willing to use the tool that week. When that person goes on leave, the content calendar goes with them. ASAE’s Communications Professionals Advisory Council found that only 25% of associations have a formal AI policy. The other 75% are running on individual heroics and hoping the institutional knowledge doesn’t walk out the door.

An association with 12,000 members sending a quarterly newsletter has made a structural claim: those 12,000 people’s attention can wait 90 days. That was a reasonable position when producing a newsletter required a week of editorial work. AI changes what that waiting costs, because a more frequent cadence is now within reach of the same small team. The question is whether you have a workflow to get there, or whether you are still optimizing the heroics.

This is where AI for associations becomes an operational question, not an experimental one.

AI publishing for associations: how to build a workflow that actually holds

Most associations reach for an AI tool first. That is the wrong order. The tool is the last decision, not the first.

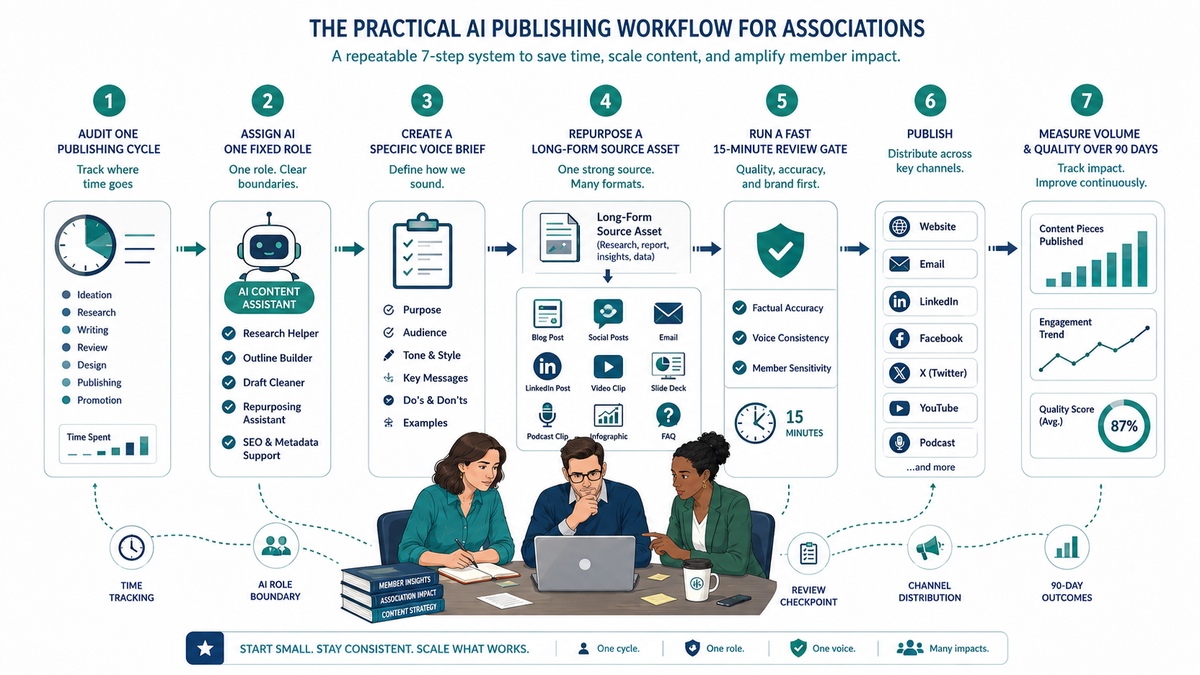

Audit where your time actually goes before you touch any AI tool

The most common assumption is that writing is where the time goes. It is usually not. In my experience auditing content operations for association marketing teams, 40–60% of a content person’s time lives in tasks that have nothing to do with writing: source-hunting, format conversion, platform adaptation, approval routing, calendar management.

If you optimize the writing step and leave the rest unchanged, you get faster drafts that sit in the same approval bottlenecks. The constraint simply shifts downstream from drafting to approval, and the efficiency gain disappears.

The fix is simple and uncomfortable: track one complete publishing cycle in real time. Not estimated time. Actual time. Every task. Every handoff. Every revision request. Note who touches it and how long each step takes. Separate the creation tasks from the coordination tasks.

That audit tells you where AI belongs in your workflow. Without it, you are guessing.
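
The arithmetic behind that audit is simple enough to sketch. The example below is a minimal illustration, not a prescribed tool: the task names, the minute counts, and the two-way split into `creation` and `coordination` are all made-up assumptions standing in for your own log of one publishing cycle.

```python
from collections import defaultdict

# Hypothetical log of one publishing cycle: (task, kind, minutes).
# Every task name and figure here is invented for illustration.
CYCLE_LOG = [
    ("draft newsletter article", "creation", 180),
    ("find source for member statistic", "coordination", 45),
    ("convert article to email format", "coordination", 60),
    ("adapt article for LinkedIn", "coordination", 40),
    ("route draft for approval", "coordination", 30),
    ("revise after review", "creation", 50),
    ("update content calendar", "coordination", 20),
]


def minutes_by_kind(log):
    """Total the logged minutes for each kind of task."""
    totals = defaultdict(int)
    for _task, kind, minutes in log:
        totals[kind] += minutes
    return dict(totals)


def coordination_share(log):
    """Fraction of all logged time spent on coordination tasks."""
    totals = minutes_by_kind(log)
    total = sum(totals.values())
    return totals.get("coordination", 0) / total if total else 0.0
```

With this invented log, `minutes_by_kind(CYCLE_LOG)` gives 230 minutes of creation and 195 of coordination, so `coordination_share(CYCLE_LOG)` is roughly 0.46. Your own numbers are the point; a spreadsheet does the same job.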

Give AI a fixed role in your workflow — not an optional one

Optional AI use produces inconsistent results. This is not a motivation problem. It is a systems problem. When AI is a suggestion — something you use when you have time — it does not get used consistently enough to produce predictable output. Predictable output is what makes review efficient. Inconsistent output is what makes review feel like rewriting from scratch.

Pick one task AI will perform on every publishing cycle without exception. One. Not “use AI whenever it makes sense.” A specific, named task that runs every time.

The first fixed AI role I recommend to lean association teams is repurposing. Take the long-form asset that was already expensive to produce — the conference keynote transcript, the annual report findings, the member survey — and turn it into three shorter assets. This extends the value of work already done without adding a second creation cycle.

That is not a creative task. It is a production task. It is exactly where AI operates reliably.

Write a voice brief your AI tool can actually follow

AI-generated content that does not sound like your association is not content. It is a liability. A member who reads three pieces that sound nothing like each other — and nothing like the organization they joined — is receiving a signal about organizational coherence, whether you intended to send it or not.

The fix is upstream. Before using AI for any member-facing output, write a voice brief specific enough to become a prompt input.

A usable voice brief includes: your organization’s name and mission in one sentence; three things you always say; three things you never say; two sample paragraphs of approved content; and the relationship framing — do you speak to members as peers, as constituents, as practitioners?

If your brand guide says “warm, professional, and member-focused,” that is not a voice brief. That is a mood board. If it says “we do not use exclamation points except in event announcements and we never use the phrase ‘exciting opportunity,’” that is a voice brief. AI can follow the second one.

Associations with existing digital brand work already have the raw materials for this brief. The clarity required for good AI output is the same clarity required for consistent web copy. If you have invested in that clarity, the voice brief takes an afternoon. If you have not, it surfaces a gap worth fixing regardless of whether you use AI.
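
One way to make a voice brief "prompt-ready" is to keep it as structured data and render it to text on every run. The sketch below assumes a hypothetical `VoiceBrief` class and a fictional organization; the field names and all example values are illustrative, apart from the two "never" rules quoted earlier in this section.

```python
from dataclasses import dataclass


@dataclass
class VoiceBrief:
    """Hypothetical container for the five parts of a voice brief."""
    organization: str
    mission: str
    always_say: list[str]
    never_say: list[str]
    sample_paragraphs: list[str]
    member_relationship: str

    def to_prompt(self) -> str:
        """Render the brief as plain text to paste ahead of any task prompt."""
        lines = [
            f"You are writing for {self.organization}.",
            f"Mission: {self.mission}",
            f"Address members as {self.member_relationship}.",
            "Always:",
            *[f"- {item}" for item in self.always_say],
            "Never:",
            *[f"- {item}" for item in self.never_say],
            "Approved samples of our voice:",
            *[f"Sample {i}: {text}"
              for i, text in enumerate(self.sample_paragraphs, 1)],
        ]
        return "\n".join(lines)


# Fictional example values; replace every one with your own.
EXAMPLE_BRIEF = VoiceBrief(
    organization="Example Association of Practitioners",
    mission="We help practitioners do their work well.",
    always_say=[
        "name the member benefit in the first sentence",
        "cite the committee or report behind a claim",
        "use plain verbs",
    ],
    never_say=[
        'the phrase "exciting opportunity"',
        "exclamation points outside event announcements",
        "acronyms without a first-use expansion",
    ],
    sample_paragraphs=["(approved paragraph one)", "(approved paragraph two)"],
    member_relationship="practitioners",
)
```

Calling `EXAMPLE_BRIEF.to_prompt()` returns one block of text. Keeping the brief in one place means a quarterly update changes every prompt at once.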

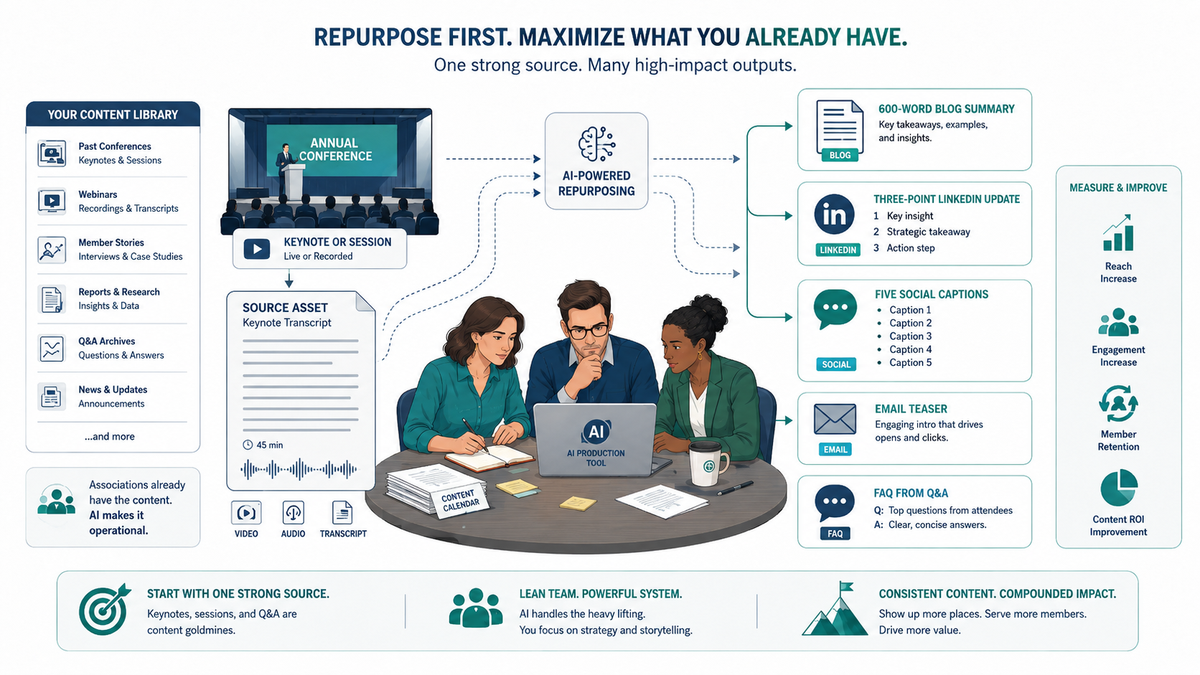

Use AI for repurposing first — original creation second

Original creation is where AI underdelivers most visibly. The output is grammatically correct and structurally coherent, yet it sounds like nothing your members would recognize as coming from you. Repurposing, however, is where AI over-delivers most consistently. Start where the return is clear.

Identify your existing highest-value asset. For most associations, that is the annual conference. A one-hour session recording contains a blog post, a LinkedIn article, a newsletter item, and a FAQ page. Most associations get a slide deck and a thank-you email.

The COPE model — Create Once, Publish Everywhere — is a publishing-industry principle that associations consistently under-apply. AI makes it operationally viable for a team of three. Take the conference keynote transcript and run it through a structured AI prompt that outputs: a 600-word blog post summary, a three-point LinkedIn update, five social captions, an email teaser, and a FAQ extraction from the Q&A section. That is a half-day of work done in 30 minutes.
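
A structured prompt of that kind might be assembled as follows. This is a sketch under stated assumptions: the function name `build_repurposing_prompt`, the exact wording of the instructions, and the idea of prepending a voice brief are illustrative choices, and the code only builds the prompt text rather than calling any particular AI tool.

```python
# The five outputs named in the paragraph above.
REPURPOSING_OUTPUTS = [
    "a 600-word blog post summary",
    "a three-point LinkedIn update",
    "five social captions",
    "an email teaser",
    "an FAQ extracted from the Q&A section",
]


def build_repurposing_prompt(transcript: str, voice_brief: str) -> str:
    """Combine a voice brief, a fixed output list, and a transcript."""
    if not transcript.strip():
        raise ValueError("transcript is empty")
    numbered = "\n".join(
        f"{i}. {item}" for i, item in enumerate(REPURPOSING_OUTPUTS, 1)
    )
    return (
        f"{voice_brief.strip()}\n\n"
        "Using only the transcript below, produce the following, "
        "each under its own heading:\n"
        f"{numbered}\n\n"
        # Assumed guardrail wording; adjust to your own review criteria.
        "Do not add statistics, quotes, or claims that are not in the "
        "transcript.\n\n"
        f"TRANSCRIPT:\n{transcript.strip()}"
    )
```

Because the output list is fixed in code, every keynote runs through the same request. That keeps the results predictable enough to review quickly.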

The mistake is using AI to write net-new content before exhausting the existing content library. Most associations have years of conference sessions, member interviews, and committee reports sitting unrepurposed. That library is the first mine.

Build a review gate — not an approval bottleneck

AI output needs review. Every piece. The failure mode is building a review process that takes as long as writing the content from scratch. When that happens, the efficiency gain disappears and the team concludes AI does not work for them. The problem is not AI. The problem is that the review process was designed for the wrong input.

Set a 15-minute review standard for AI-assisted content. Review for three things: factual accuracy, voice consistency, and member-sensitivity. Check factual accuracy because AI hallucinates — it will invent statistics, cite studies that do not exist, and attribute quotes to real people who never said them. Check voice consistency because drift accumulates. Check member-sensitivity because a single misreading at renewal time costs more than a month of content velocity gains.

That is the list. If a review is taking longer than 15 minutes, one of two things is true: the voice brief is missing something, or the task is not suited to AI.
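
If you log reviews, the 15-minute standard becomes something you can check rather than remember. The sketch below assumes a hypothetical `Review` record with one field per check; the names are illustrative, and only the three criteria and the 15-minute limit come from the text above.

```python
from dataclasses import dataclass

REVIEW_LIMIT_MINUTES = 15  # the review standard described above


@dataclass
class Review:
    """Hypothetical record of one review of an AI-assisted piece."""
    piece: str
    minutes: int
    factual_accuracy: bool
    voice_consistency: bool
    member_sensitivity: bool

    def passed(self) -> bool:
        """True only when all three checks passed."""
        return (
            self.factual_accuracy
            and self.voice_consistency
            and self.member_sensitivity
        )

    def over_limit(self) -> bool:
        """True when the review ran longer than the standard."""
        return self.minutes > REVIEW_LIMIT_MINUTES


def needs_workflow_fix(reviews):
    """Pieces whose review ran long: revisit the brief or the task."""
    return [r.piece for r in reviews if r.over_limit()]
```

A piece that keeps appearing in `needs_workflow_fix` is a signal about the voice brief or the task, not about the reviewer.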

The associations I have seen get this right separated two distinct decisions that most teams collapse into one. The content director reviews AI output against those three criteria in 15 minutes. Publication approval (timing, channel, sequencing) is a separate decision that does not require rewriting the content. When both decisions go to the same meeting, both decisions slow down.

Measure what changes — not just what gets produced

Most AI publishing conversations stop at output volume. Posts per month. Emails per quarter. These are the wrong primary metrics.

The right question is whether publishing cadence holds without staff burnout, and whether content quality — measured by engagement — holds or improves. Volume without engagement means you are burning member attention faster than you are building it. That is the opposite of what a sustainable content operation looks like.

Before you start, pick two metrics: one volume metric and one quality metric. Open rate for the email. Click-through for the blog. Member response rate for the survey. Measure both for 90 days. If volume is up and quality is flat, the workflow needs a stronger review gate. If both are up, you have built something worth documenting.
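
The 90-day reading can be written down as a small decision rule. This is a sketch, not a standard: the function names, the `flat_band` threshold for what counts as "flat," and the fallback message are assumptions. The two outcomes for rising volume are the ones this article describes.

```python
def pct_change(before: float, after: float) -> float:
    """Relative change from the baseline period to the 90-day period."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before


def read_results(volume_before, volume_after,
                 quality_before, quality_after,
                 flat_band=0.02):
    """Apply the two-metric rule. flat_band is an assumed tolerance:
    changes within plus or minus 2% are treated as flat."""
    volume_up = pct_change(volume_before, volume_after) > flat_band
    quality_up = pct_change(quality_before, quality_after) > flat_band
    if volume_up and quality_up:
        return "document the workflow"
    if volume_up:
        # Volume rose but quality did not improve.
        return "strengthen the review gate"
    # Assumed fallback: the article gives no rule for flat or falling volume.
    return "volume did not rise; revisit the fixed AI task"
```

For example, going from 8 to 12 posts with open rate unchanged at 30% returns "strengthen the review gate".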

According to Averi AI’s 2026 study of nonprofit AI workflows, teams that formalized their AI processes reported saving 15–25 hours per week. The teams that saved those hours are not the ones who found the best AI tool. They are the ones who redesigned the workflow first.

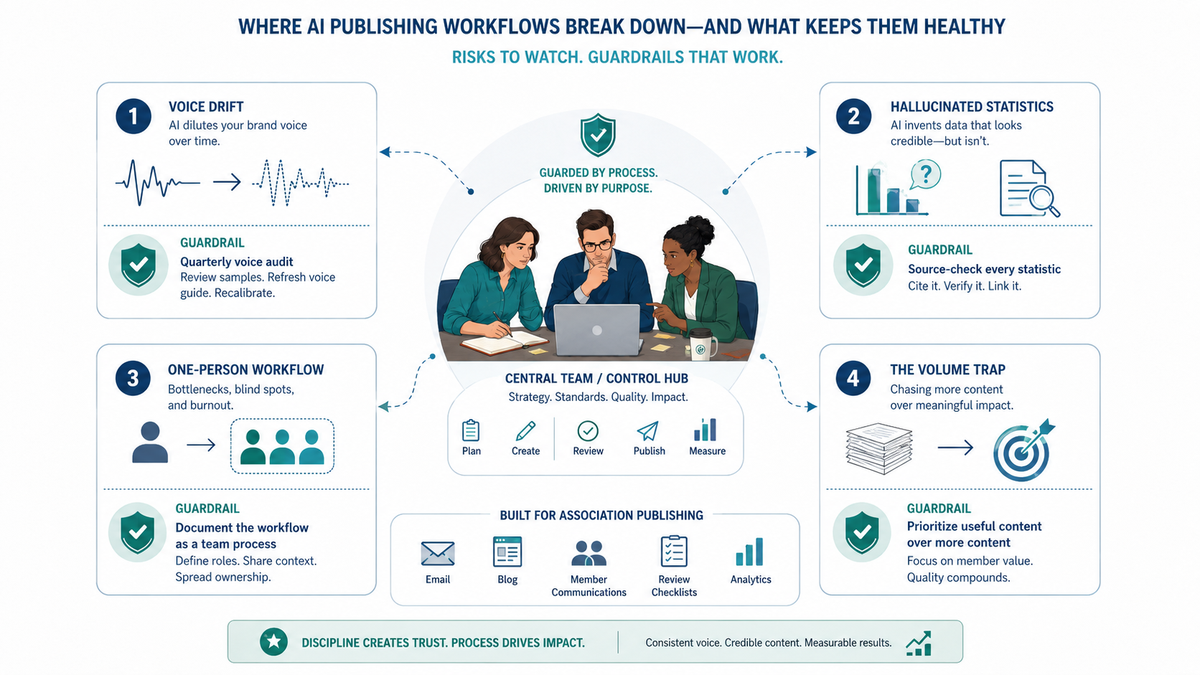

Where AI publishing workflows break down

Building a workflow is the first half. Maintaining it is where most associations lose the gains they built.

Voice drift is the first failure mode. AI output gradually moves your tone toward a generic institutional register. It happens incrementally: one piece is slightly more formal, the next slightly more passive, the one after that sounds like a press release. By month three, a member reading your content could not tell it came from you. The fix is a quarterly voice audit. Read three recent pieces side by side and ask whether a member who knows the organization could identify the source. If the answer is no, the voice brief needs updating and the last quarter of AI output needs a review pass.

Statistics hallucination is the second failure mode. AI will invent statistics with the same confidence it reports real ones. In the IAB State of Data 2025 report, over 70% of marketers reported encountering AI-related incidents, including hallucinations, bias, and off-brand content. Every AI-generated statistic needs a source check. Every single one. The reason is not that AI lies. The reason is that AI does not know the difference between a claim it generated and a claim it retrieved. You do. Act accordingly.
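
A small script can surface the sentences that need a source check, though it cannot do the checking. The sketch below uses only Python's standard `re` module. The pattern is an assumption about what "statistic-like" text looks like (percentages and numbers with thousands separators), and it will miss figures written in other forms.

```python
import re

# Assumed pattern: "70%", "4.5 percent", or figures such as "12,000".
STAT_PATTERN = re.compile(
    r"\b\d+(?:\.\d+)?\s?(?:%|percent\b)|\b\d{1,3}(?:,\d{3})+\b",
    re.IGNORECASE,
)


def flag_statistics(draft: str) -> list[str]:
    """Return each sentence in the draft that contains a statistic-like figure."""
    # Naive sentence split on whitespace that follows ., ! or ?
    sentences = re.split(r"(?<=[.!?])\s+", draft.strip())
    return [s for s in sentences if STAT_PATTERN.search(s)]
```

Every sentence it returns still needs a human to find the source or cut the claim.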

The one-person workflow is the third failure mode. If the AI workflow lives in one person’s head and that person leaves, the workflow leaves with them. This is the same fragility that produces the content backlog in the first place: knowledge concentrated in individuals rather than documented in systems. Document the AI workflow as a team process — the prompts, the review criteria, the publishing checklist. If it is not written down, it is not a workflow. It is a habit.

The volume trap is the fourth failure mode. AI makes it easy to publish more. More is not better. Associations that publish more without strategy see no corresponding engagement lift. Instead, they see a gradual decline in open rates as members learn that more content does not mean more useful content. The difference between AI content operations and AI content volume is the discipline to ask whether each piece earns its place in a member’s inbox before it ships.

Frequently Asked Questions

How do small association teams start using AI for content without getting overwhelmed?

Start with one task, not a platform. Pick a specific, recurring content job — social adaptations of blog posts, summary paragraphs for email headers, FAQ extraction from session recordings — and use AI for that task on every publishing cycle. Do not try to replace your full content workflow at once. The teams that get overwhelmed are the ones that try to change everything simultaneously.

What is the best AI tool for association content publishing?

The tool matters less than the workflow it sits inside. ChatGPT, Claude, and Gemini all produce workable output when given a well-structured voice brief and a specific task. ASAE’s marketing survey cites Writer and Gemini as tools associations are using at scale. For conference content specifically, Maestra.ai handles transcription and captions. For personalized newsletters, rasa.io builds member-specific content feeds. Start with the task, then find the tool that handles it.

How do I make sure AI-generated content sounds like my association and not a generic chatbot?

Write a voice brief before you write a single prompt. The voice brief is not a brand guide — it is a specific, prompt-ready document: what you always say, what you never say, two sample paragraphs of approved content, and how you address members. AI output sounds generic when the input is generic. Specificity in the brief produces specificity in the output.

Can AI really replace a content writer for an association?

No. AI replaces specific production tasks — format conversion, repurposing, social adaptation, FAQ extraction. It does not replace the judgment about what is worth saying, the institutional knowledge about what members actually care about, or the relationship credibility that makes a member open your email. The organizations that get the most from AI are the ones that are clearest about what it cannot do.

How do associations handle fact-checking AI-generated content?

Build fact-checking into the review gate as a non-negotiable step, not an afterthought. Focus the check on statistics, study citations, and attributed quotes — these are the three categories where AI hallucinates most consistently. If a statistic appears in AI output without a source, search for the source before publishing. If you cannot find it, remove the statistic. An unsourced claim in member-facing content costs more credibility than the line is worth.

What types of association content work best with AI?

Repurposing tasks consistently outperform original creation tasks. Conference session summaries, social adaptations of existing articles, FAQ extractions from long-form content, email teasers for published pieces — these are where AI delivers reliably. Net-new thought leadership, member spotlights, and advocacy communications require too much institutional context and relationship judgment to delegate to AI without heavy editing.

How long does it take to see results from an AI publishing workflow?

The volume results show up within the first publishing cycle. The quality results take 90 days to assess honestly. Set a volume metric and a quality metric before you start — open rate, click-through, member response — and measure both. If volume improves but quality does not, the workflow needs a stronger review gate, not more AI.

If you want this mapped to your specific publishing stack and team size — the tasks, the tools, the review gates, and the metrics — that is what an AI Readiness Audit covers. Schedule one at /contact/.