AI content operations is the system that connects AI tools into a repeatable, governed workflow for planning, creating, and distributing content. It is not a tool. It is the infrastructure around your tools. For associations running lean marketing teams, it means your staff spends time on judgment and member relationships. Not on manually moving content between disconnected platforms.

What AI content operations actually means in practice

Most associations have already answered the question: should we use AI for content? The answer was yes, probably two years ago. Yet the question most teams are still trying to answer is this: why does it feel like they are working just as hard, producing more content, and trusting it less?

In most cases, the answer is the same. They adopted tools without building a system.

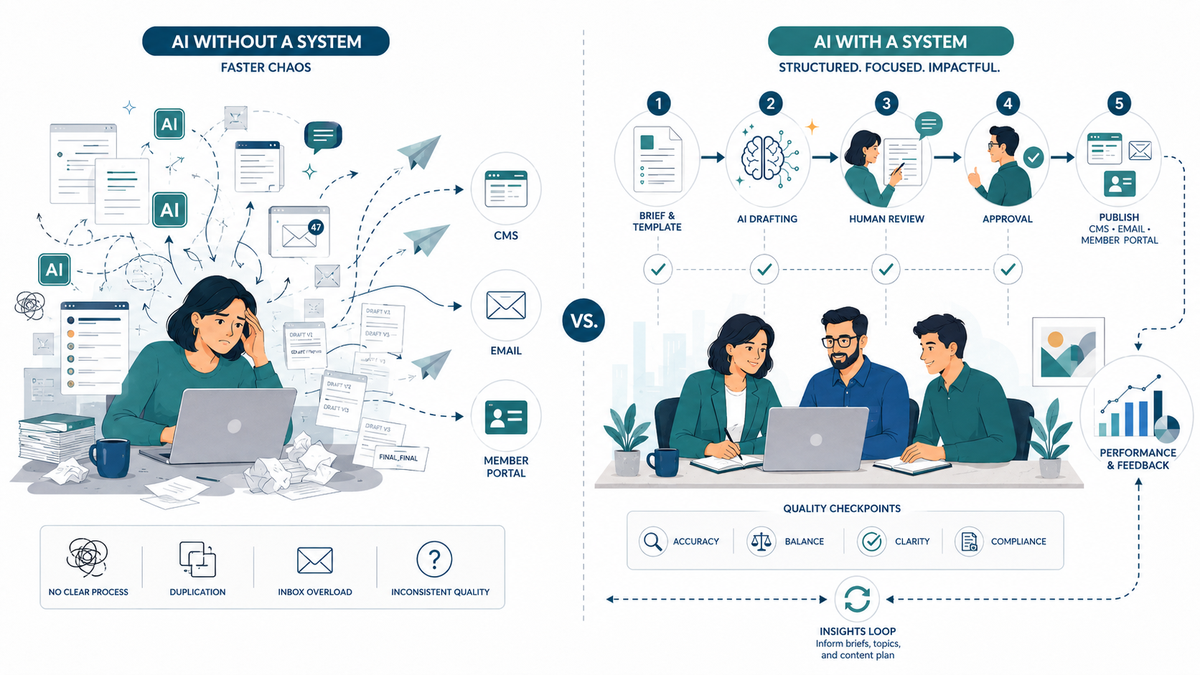

Content strategy is the what and the why: what you’re publishing, who it’s for, what it’s supposed to do. Content operations is the how — the workflows, the roles, the checkpoints, and the standards that turn strategy into published content consistently. When you add AI tools to a content operation without those things, you don’t get a system. You get faster chaos.

AI content operations — the actual practice, not the vendor pitch — is about building that infrastructure deliberately. Specifically, it means knowing who writes the brief and what the brief contains. It means knowing who reviews the output, what the quality standard is, and what happens when the output is off-voice. It also means knowing whether the published piece did what it was supposed to do.

That last part matters more than most teams realize. In fact, the Averi.ai 2026 State of Content Workflows report found that content updated with structured headings and FAQ sections sees 2.8 times more AI citations. Content ops is not just about production speed. It is about building content that earns authority over time.

A real AI content operations system has four components. First, documented prompt templates for each content type. Second, defined workflow stages with explicit human checkpoints. Third, governance rules for who owns what, what the quality bar is, and what the review SLA is. Fourth, a performance feedback loop that routes what you learn back into your prompts and briefs. Those four things are what separate an AI content operation from a stack of AI subscriptions.

Why associations have a content operations problem AI can actually solve

The structural constraints associations face are not the same ones a startup marketing team faces. Your AMS does not talk to your CMS. Your advocacy calendar has deadlines that cannot slip. A renewal cycle generates a content spike twice a year. Your member communications expectations were set before you were in this role. And in most cases, one or two people are responsible for all of it.

According to ASAE’s State of Associations report, 88% of associations now use AI for content creation. That adoption rate, however, has not translated into readiness. The tools are in the building. The system for using them is not.

I’ve seen this failure mode often enough that I can describe it precisely. The marketing director subscribes to an AI writing tool. They use it to draft the member newsletter faster. The newsletter goes out sounding slightly generic, slightly off-brand. The deadline was met, so nobody says anything. Three months later, the board asks why member open rates dropped. Nobody connects it to the newsletter voice change. That is because nobody documented that the process changed.

That is a content operations failure. The AI tool worked as advertised. The system around it didn’t exist.

For the associations that get this right, the picture is different. A two-person marketing team with a working AI strategy for associations can produce conference previews, advocacy alerts, chapter communications, and renewal content. They do it without burning out. They’ve built prompts that encode their voice, checkpoints that catch errors before publish, and a governance model that distributes review rather than concentrating it.

McKinsey’s 2026 marketing workflows research found that nearly 90% of CMOs are experimenting with AI. However, fewer than 10% have captured value across end-to-end workflows. Associations are not unusual in this. They are running the same failure mode with smaller teams and less margin for error.

The four components that make an AI content system work

Building an AI content operation is not a technology project. You do not need new software. Instead, you need to answer four questions before you open any AI tool — then build a simple system around the answers.

Documented prompt templates

A prompt template is not “write a newsletter about our annual conference.” Instead, it is a structured brief that contains the audience, the required information, and the voice standard. It also defines the output format and the specific things the AI should not do. You need one for each recurring content type: member spotlight, advocacy alert, event recap, renewal email, chapter update.

The discipline here is that the template encodes what your team already knows about your voice and your members. When a new staff member runs a conference recap through the template, the output sounds like your association. Not because AI is magic, but because someone wrote down what that means.
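For teams that keep their templates somewhere more structured than a shared document, here is a minimal sketch of what one could look like in Python. The class name, field names, and sample values are all illustrative assumptions, not a standard. The point is only that every field named above (audience, required information, voice standard, output format, and the things the AI should not do) gets written down once and reused.

```python
from dataclasses import dataclass, field


@dataclass
class PromptTemplate:
    """One template per recurring content type (hypothetical structure)."""
    content_type: str
    audience: str
    required_info: list[str]
    voice_standard: str
    output_format: str
    do_not: list[str] = field(default_factory=list)

    def render(self, details: dict[str, str]) -> str:
        # Refuse to build a prompt if the brief is missing required information.
        missing = [key for key in self.required_info if key not in details]
        if missing:
            raise ValueError(f"Brief is missing required information: {missing}")
        lines = [
            f"Content type: {self.content_type}",
            f"Audience: {self.audience}",
            f"Voice: {self.voice_standard}",
            f"Output format: {self.output_format}",
            "Required information:",
        ]
        lines += [f"- {key}: {details[key]}" for key in self.required_info]
        if self.do_not:
            lines.append("Do not:")
            lines += [f"- {rule}" for rule in self.do_not]
        return "\n".join(lines)


# Sample values are placeholders, not recommendations.
event_recap = PromptTemplate(
    content_type="event recap",
    audience="members who did not attend",
    required_info=["event_name", "key_takeaways"],
    voice_standard="plain, collegial, second person",
    output_format="short article with three subheadings",
    do_not=["invent quotes", "use superlatives about attendance"],
)

prompt = event_recap.render(
    {"event_name": "Annual Conference", "key_takeaways": "to be filled in by staff"}
)
```

The resulting `prompt` string is what a staff member would paste into whatever AI tool the team already uses. A shared document with the same fields does the same job.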

Workflow stages with human checkpoints

AI drafts. Humans review. That is the minimum viable workflow, and most teams do it informally. However, the difference between informal and operational comes down to explicitness. An operational workflow specifies what the human checks: brand voice consistency, factual accuracy, member-specific context the AI couldn’t know, and approval status.

The pattern holds across content operations platforms: structured workflows that define where AI stops and human judgment starts reduce rework and minimize confusion. The teams that skip this step produce content faster and spend more time fixing it.
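To make "explicit" concrete, here is a small sketch of a review checkpoint, assuming the three checks named above plus a derived approval status. The check names and the `Review` structure are hypothetical. A printed checklist enforces the same rule: nothing is approved until every check has been run and passed.

```python
from dataclasses import dataclass

# The checks a human reviewer runs on every AI draft (names are illustrative).
REVIEW_CHECKS = ("brand_voice", "factual_accuracy", "member_context")


@dataclass
class Review:
    reviewer: str
    passed: dict[str, bool]  # check name -> did the draft pass?


def approval_status(review: Review) -> str:
    """Derive the approval status from the recorded checks."""
    # A check that was never run blocks approval, same as a failed one.
    unchecked = [c for c in REVIEW_CHECKS if c not in review.passed]
    if unchecked:
        return "incomplete: " + ", ".join(unchecked)
    failed = [c for c in REVIEW_CHECKS if not review.passed[c]]
    if failed:
        return "needs revision: " + ", ".join(failed)
    return "approved"


status = approval_status(
    Review(
        reviewer="marketing director",
        passed={"brand_voice": True, "factual_accuracy": True, "member_context": False},
    )
)
```

Here `status` comes back as `"needs revision: member_context"`, which tells the reviewer exactly what to add before publishing.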

Content governance

Governance is the word that makes marketing directors’ eyes glaze over, so let me be specific. At the association level, it means three things. First: who owns each content type — not “everyone contributes” but one person who is accountable. Second: what the quality standard is for each type — not “it should be good” but what it must specifically contain and avoid. Third: what the review SLA is — not “when someone has time” but a real deadline the workflow enforces.

Without governance, AI content operations produces the approval bottleneck that kills most teams’ momentum. Everyone is technically reviewing. Nobody is actually accountable. Content sits in draft.
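The three governance questions can be written down as one record per content type. The sketch below is an assumption about how a team might do that, with hypothetical field names and a deliberately crude phrase check standing in for the quality standard. What matters is that owner, standard, and SLA are explicit enough for a script, or a person, to tell when a draft is stuck.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class GovernanceRule:
    content_type: str
    owner: str                    # one accountable person, not "everyone"
    must_contain: tuple[str, ...]
    must_avoid: tuple[str, ...]
    review_sla: timedelta         # a real deadline for review


def is_overdue(rule: GovernanceRule, submitted_at: datetime, now: datetime) -> bool:
    """True when a draft has waited for review longer than the SLA allows."""
    return now - submitted_at > rule.review_sla


def standard_violations(rule: GovernanceRule, draft: str) -> list[str]:
    """Crude phrase matching; a human reviewer still makes the final call."""
    text = draft.lower()
    problems = [f"missing: {p}" for p in rule.must_contain if p.lower() not in text]
    problems += [f"contains: {p}" for p in rule.must_avoid if p.lower() in text]
    return problems


# Placeholder values for illustration only.
advocacy_alert = GovernanceRule(
    content_type="advocacy alert",
    owner="communications director",
    must_contain=("contact your representative",),
    must_avoid=("guaranteed",),
    review_sla=timedelta(hours=24),
)
```

Calling `is_overdue(advocacy_alert, submitted_at, datetime.now())` on each draft in the queue is enough to surface content that is sitting in draft with nobody accountable.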

Performance feedback loop

The fourth component is the one most teams skip entirely because it feels like extra work. It is not extra work. It is what prevents the system from degrading over time.

Connecting your content performance data back to your brief templates and prompt designs is what makes an AI content operation compound rather than plateau. That data includes open rates, click-throughs, page engagement, and member portal activity. When a renewal email format consistently outperforms others, that format becomes the template. When a conference recap structure drives member portal logins, that structure gets documented and repeated. The digital infrastructure that carries this data back to your team is part of what makes content ops sustainable.
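As a rough illustration of "that format becomes the template," the sketch below picks the renewal email format with the best average open rate. The function name, the minimum-sends threshold, and the sample numbers are all made up for the example. The threshold is there so that one lucky send does not rewrite your template.

```python
from collections import defaultdict
from statistics import mean


def best_format(sends, min_sends=3):
    """Return the format with the highest mean open rate.

    `sends` is an iterable of (format_name, open_rate) pairs. Formats with
    fewer than `min_sends` sends are ignored as too thin to judge.
    Returns None when no format has enough data yet.
    """
    rates = defaultdict(list)
    for format_name, open_rate in sends:
        rates[format_name].append(open_rate)
    eligible = {
        name: mean(values) for name, values in rates.items() if len(values) >= min_sends
    }
    if not eligible:
        return None
    return max(eligible, key=eligible.get)


# Illustrative numbers only, not real benchmarks.
renewal_sends = [
    ("story-led", 0.41), ("story-led", 0.39), ("story-led", 0.43),
    ("deadline-led", 0.35), ("deadline-led", 0.36), ("deadline-led", 0.34),
    ("discount-led", 0.60),  # a single send, so it is not yet eligible
]

winner = best_format(renewal_sends)
```

With this sample data, `winner` is `"story-led"`. The single high-performing `"discount-led"` send is set aside until there is enough evidence, which is the same judgment a careful marketer would make by hand in a spreadsheet.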

What people get wrong when they hear “AI content operations”

Three misconceptions come up consistently when I talk to association marketing directors about this.

Misconception 1: It is a product you buy

The first misconception is that AI content operations is a software product you buy. It is not. No single platform is AI content operations. Platforms can support a content operation. However, the operation itself is a documented set of practices — role-assigned, enforced, and built around whatever tools you already have. In practice, an association with a content operations discipline and ChatGPT will outperform an association with a $50,000 platform and no operational structure. The research bears this out. McKinsey found that fewer than 10% of CMOs have captured end-to-end value from AI. The gap is not tool quality. It is operational infrastructure.

Misconception 2: It replaces your team

The second misconception is that content operations means replacing your team with AI. It means the opposite. The governance model, the quality checkpoints, the voice standards, the member context — all of that is human work. In practice, AI handles the drafting, the formatting, and the first pass. The judgment about whether something sounds like your association and serves your members is yours. An AI content operation that removes human judgment from member-facing content is not an operation. It is a liability.

Misconception 3: It is only for large organizations

The third misconception is that AI content operations is only for large organizations with dedicated operations staff. In reality, I work with associations that have one marketing director and a part-time coordinator, and they build and run effective AI content operations. It does not require an operations manager. It requires documentation and discipline, and neither of those depends on headcount.

The real failure mode is not adopting too much AI. It is publishing raw AI output without editorial review, and writing vague prompts that produce generic content. Both of those are operations problems, not tool problems.

Frequently Asked Questions

What is the difference between AI content operations and using AI tools for content?

Using AI tools for content means opening ChatGPT and drafting something. AI content operations, by contrast, is the system around that action: the prompt templates that encode your voice, the workflow that defines who reviews the output, the governance that sets the quality bar, and the performance loop that improves the system over time. Tools are discrete instruments. Operations is the infrastructure that connects them.

How do associations with small marketing teams get started with AI content operations?

Start with one content type: the one your team produces most often and finds most time-consuming. For most associations, that is the member newsletter or event recap. Document the prompt you use to draft it, define who reviews it and what they check for, and publish one piece using that process. Refine the prompt based on what the review caught. Repeat. You have an AI content operation for that content type. Expand from there.

Does AI content operations require a dedicated operations role or special software?

No to both. A dedicated operations role helps at scale, but it is not a prerequisite. The minimum viable version is a shared document with prompt templates, a simple workflow checklist, and a named reviewer for each content type. The software you already use — your CMS, your email platform, a free AI writing tool — is sufficient to start.

What types of association content benefit most from an AI content operations system?

Recurring, structured content types benefit most: renewal emails, conference previews, event recaps, advocacy alerts, chapter communications, member spotlights. These are the content types where the structure is consistent enough to template and the volume is high enough that the efficiency gain is real. One-off strategic documents and board communications benefit less from AI drafting and more from human attention.

How does AI content operations handle brand voice and member-specific context?

Brand voice lives in the prompt template. When you document your voice standards — the register, the tone, what you never say, what you always say about your members — and encode them into your prompt templates, the AI output reflects them consistently. Member-specific context is handled at the human checkpoint: the reviewer adds what the AI couldn’t know. The system does not replace member knowledge. It frees up the time to apply it.

Is AI-generated association content compliant with ASAE content standards?

ASAE does not publish a prescriptive AI content standard as of this writing. The more useful question is whether your AI-generated content maintains the accuracy, member-specificity, and trust that your members expect. That is an operations question, not a compliance question. It is answered by your review checkpoints, your governance model, and your quality bar. Not by what tool produced the first draft.

How long does it take to build an AI content operations workflow?

A working workflow for one content type takes a day to build and a month to stabilize. You write the prompt template, run three to five pieces through it, refine based on review feedback, and then it runs. The governance layer — who owns what, what the quality bar is, what the review SLA is — takes a planning meeting to define and a quarter to make habitual. Expect six months before the system feels like infrastructure rather than process.

What is the biggest mistake associations make when adopting AI for content?

Adopting tools before building the system. The mistake is not using AI — 88% of associations are already there. The mistake is adding AI drafting capacity to a content operation that does not have documented voice standards, defined review checkpoints, or a governance model. That combination produces faster, higher-volume content that sounds slightly wrong and erodes member trust incrementally. The fix is to slow down before you scale up: build the system before you run it.

If your association is already using AI for content and something feels off — the volume is up, the trust is down, the team is still tired — the problem is probably the system, not the tools. An AI Readiness Audit is how I diagnose that. We look at what you’re producing, how you’re producing it, where the checkpoints are missing, and what it would take to build a content operation that actually compounds. Schedule an AI Readiness Audit if you want to know where your system stands.